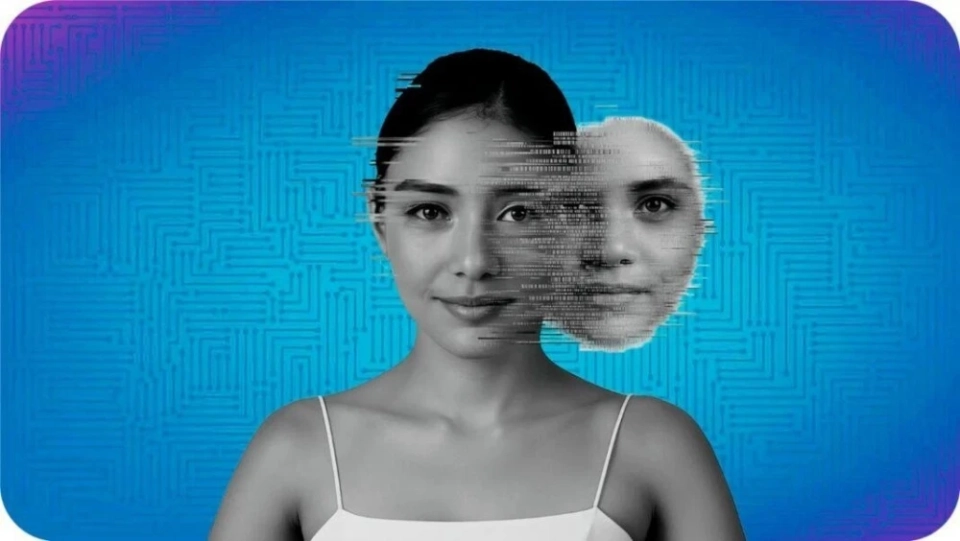

A new regulatory act titled "The Fundamental Law on the Development of Artificial Intelligence and the Formation of a Foundation for Trust" has come into effect in South Korea, Kazinform reports, citing Yonhap. The law establishes a legal framework to combat misinformation and other threats arising in the course of AI development.

The Ministry of Science of South Korea announced that the government has developed extensive guidelines for the use of AI technologies at the state level. The law aims to increase the accountability of developers and companies for detecting and preventing the use of deepfakes and misinformation generated by AI. Authorities have also been granted powers to conduct inspections and impose fines for violations.

The law introduces a new definition of "high-risk artificial intelligence," which encompasses AI models that impact vital areas such as employment, credit assessment, and medical consultations. These models can significantly affect the safety and daily lives of individuals.

Organizations using high-risk AI technologies must inform users that their services are based on AI and are responsible for ensuring the safety of these solutions. All materials created using AI must have watermarks indicating their artificial origin.

"Labeling AI content is a necessary step to prevent negative consequences, such as the creation of deepfakes," emphasized a ministry representative.

Companies providing AI services in South Korea must appoint a representative in the country if they meet at least one of the following criteria: annual revenue exceeding 1 trillion won (approximately $681 million), domestic sales of 10 billion won or more, or more than 1 million active users per day. Currently, companies such as OpenAI and Google meet these criteria.