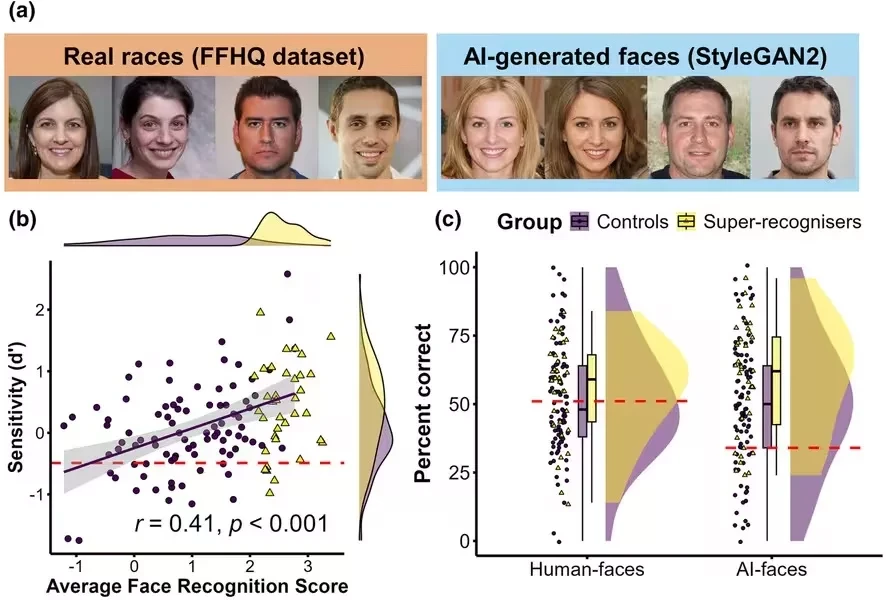

Neural networks can now generate faces that increasingly pass human visual inspection. People sometimes not only mistake generated images for real ones but also rate them as more "plausible" than ordinary photographs. A study conducted by a group of scientists indicates that artificial intelligence produces not individual faces but statistically averaged ones.

The research team analyzed how people perceive generated portraits and why most observers cannot tell them apart from real ones. The focus was on a group of "super-recognizers": individuals with exceptional skill at recognizing and remembering faces.

The study involved 36 such experts and 89 volunteers who had scored highly on perception tests. Participants viewed 200 images, half created by a neural network and half real photographs. The two sets were matched on gender, facial expression, and other key parameters.

The results were revealing. Ordinary participants struggled to distinguish "fakes" from originals, with accuracy close to chance (50% for a two-way choice). Super-recognizers performed significantly better, but even their accuracy reached only 57%.

This indicates that the task remains challenging even for specialists.
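
To put the 57% figure in perspective, the sketch below computes how likely a pure guesser would be to reach at least that score on a 200-image, two-choice test. It is an illustration only: it treats 57% as a single observer's score of 114 correct answers out of 200 and assumes independent trials, neither of which is stated in the study, and the function name `tail_probability` is made up for this example.

```python
from math import comb

def tail_probability(n_trials: int, n_correct: int, p_chance: float = 0.5) -> float:
    """Probability of getting at least n_correct answers right by guessing alone."""
    return sum(
        comb(n_trials, k) * p_chance**k * (1 - p_chance) ** (n_trials - k)
        for k in range(n_correct, n_trials + 1)
    )

# Assumption: 57% of 200 images corresponds to 114 correct answers.
p_value = tail_probability(200, 114)
print(f"P(at least 114/200 by chance) = {p_value:.3f}")
```

Under these assumptions the probability comes out at a few percent: a score of 57% is unlikely to be pure luck, yet it is only a modest step above the 50% chance level.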

Researchers also identified an interesting pattern: the better a person recognizes real faces, the more effectively they identify artificial ones. There is a stable connection between these skills, suggesting that the recognition of AI-generated portraits is based not on searching for technical flaws but on fundamental mechanisms of face perception.

A notable effect emerged in group decisions. When eight super-recognizers pooled their assessments, accuracy increased significantly. In the control group, no "wisdom of the crowd" effect appeared, which suggests that the experts have better-calibrated confidence and judge their own mistakes more accurately.
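
Why pooling judgments can help is easy to illustrate with a simplified majority-vote model. The sketch assumes eight independent raters who are each correct 57% of the time and breaks ties with a coin flip. The study does not say how assessments were combined, so the aggregation rule, the independence assumption, and the function name `majority_accuracy` are all illustrative.

```python
from math import comb

def majority_accuracy(n_raters: int, p_correct: float) -> float:
    """Probability that a simple majority vote is right, with ties split 50/50."""
    total = 0.0
    for k in range(n_raters + 1):  # k = number of raters who answer correctly
        prob_k = comb(n_raters, k) * p_correct**k * (1 - p_correct) ** (n_raters - k)
        if 2 * k > n_raters:       # clear majority is correct
            total += prob_k
        elif 2 * k == n_raters:    # tie: resolved by a fair coin flip
            total += 0.5 * prob_k
    return total

for p in (0.50, 0.57):
    print(f"individual accuracy {p:.2f} -> group of 8: {majority_accuracy(8, p):.3f}")
```

In this toy model, an individual accuracy of 57% rises to roughly 65% for the group, while a group of pure guessers stays at 50%. That matches the intuition that pooling cannot help when individual judgments carry almost no signal.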

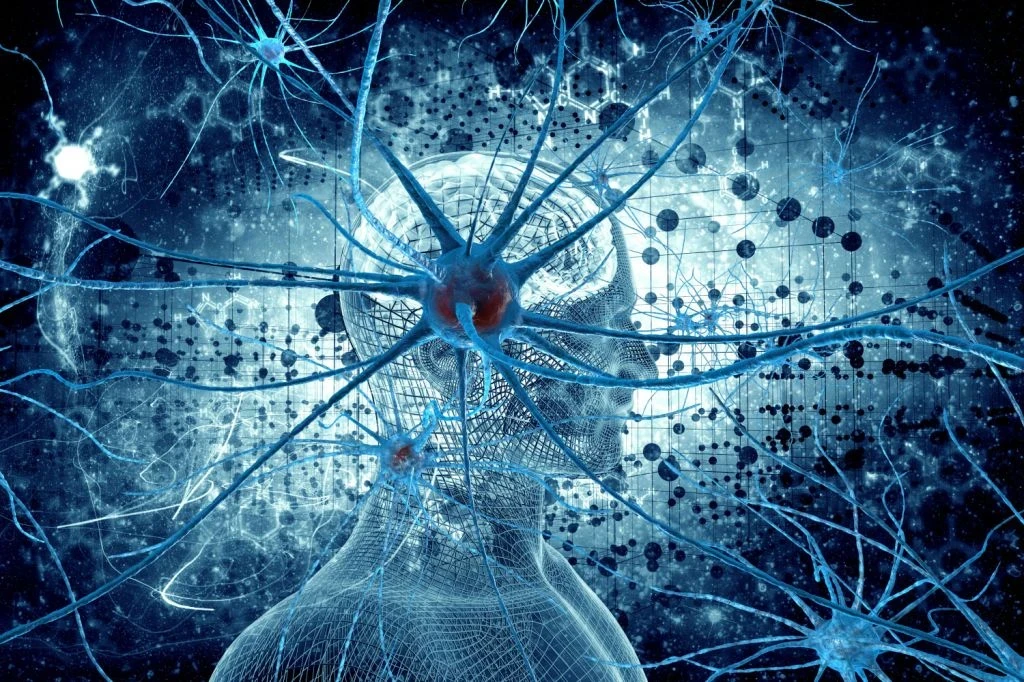

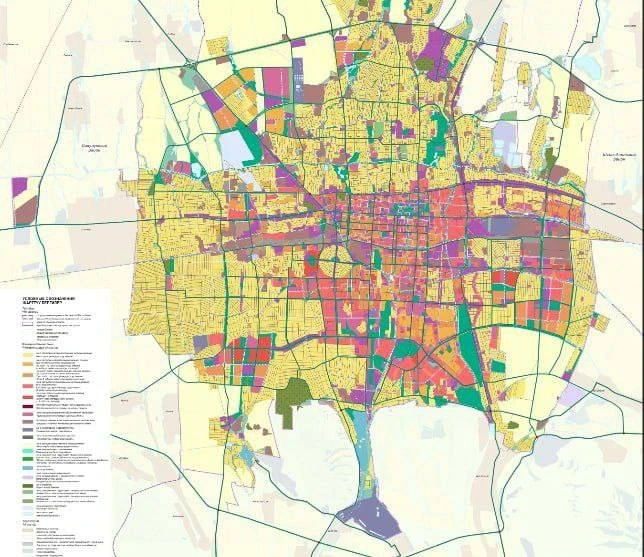

To understand the reasons for the differences, scientists analyzed the images themselves using neural networks trained to recognize faces. This allowed them to create a map of the "face space"—a multidimensional model in which each face is represented by a set of characteristics.

It turned out that real faces are distributed unevenly and diversely in this space, differing in many small unique details. In contrast, generated images are concentrated closer to the center—in the area of the "average" face.

In other words, AI tends to create maximally averaged, statistically typical portraits. The effect observed in this study has been termed "hyper-averageness." It follows from how generative models work: they tend to suppress rare and unstable features while amplifying the most common ones. The result is not a specific person but a kind of idealized portrait with minimal deviations from the norm.

Paradoxically, this is what makes AI-generated faces convincing. In reality, most people have unique combinations of features that rarely occur together, so their faces do not conform to statistical norms. The neural network, by contrast, creates images that appear more harmonious and "correct" than real people.
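
The "face space" picture can be made concrete with a toy calculation. In the sketch below, each face is a short vector of made-up numbers standing in for the features a face-recognition network would produce. The two-dimensional vectors, the sample values, and the helper names `centroid` and `distance_to_center` are assumptions for illustration, not the study's data or method.

```python
from math import dist
from statistics import fmean

def centroid(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    return [fmean(component) for component in zip(*vectors)]

def distance_to_center(vectors, center):
    """Euclidean distance of each vector from the given center."""
    return [dist(v, center) for v in vectors]

# Hypothetical 2-D embeddings; real face embeddings have far more dimensions.
real_faces = [[2.0, -1.5], [-1.8, 2.2], [1.1, 2.7], [-2.4, -1.9]]
generated_faces = [[0.3, -0.2], [-0.1, 0.4], [0.2, 0.1], [-0.3, -0.2]]

# The "average face" is estimated here from the real photographs only.
center = centroid(real_faces)

mean_real = fmean(distance_to_center(real_faces, center))
mean_generated = fmean(distance_to_center(generated_faces, center))
print(f"mean distance from center, real:      {mean_real:.2f}")
print(f"mean distance from center, generated: {mean_generated:.2f}")
```

A smaller average distance for the generated set is what "hyper-averageness" would look like in such a space. With real data, the same comparison would be run on embeddings produced by a face-recognition model.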

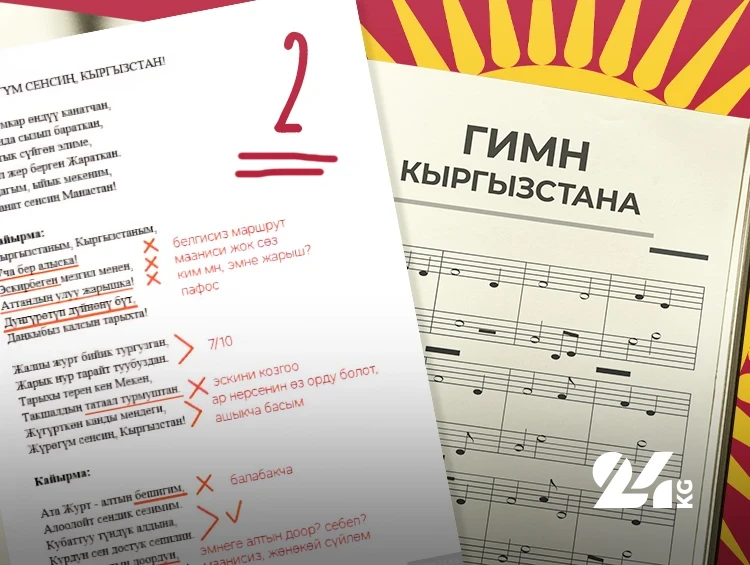

As the analysis showed, super-recognizers intuitively grasp this feature. They focus not on the attractiveness, youth, or emotionality of faces but on their "similarity to the averaged model." This factor helps them distinguish generated images from real ones.

Ordinary observers, in turn, often rely on superficial impressions: how lively, likable, or "socially active" a face appears. These cues turn out to be poor indicators of authenticity and get in the way of recognition.

Nevertheless, experts cannot clearly explain how they make decisions. Their approach is intuitive and formed at the level of unconscious experience.

The authors of the study emphasize that even the best observers run up against the limits of their abilities. As generative models develop, the task will only become harder.

The results of the study are significant for various fields. Scientists warn that the use of AI-generated faces in psychological experiments, educational processes, or legal proceedings can distort perception and influence decision-making. These images are not neutral and are systematically biased towards the "ideal norm."

In the future, researchers suggest developing hybrid detection systems that combine algorithms and human expertise. Computers will be able to analyze statistical patterns, while specialists will interpret complex borderline cases. The ability to notice subtle deviations from the norm may become an important skill in the digital age. The study concludes that identifying "fakes" is not only a technological challenge but also a question of adapting human perception to new realities.