Asking a chatbot for health advice? After the recent launch of OpenAI's ChatGPT Health service, there are several important factors to consider.

With the launch of ChatGPT Health by OpenAI, several important aspects arise that should be considered before turning to AI chatbots for medical advice.

Given that millions of users are already seeking advice from chatbots, it was only a matter of time before tech companies began developing specialized solutions for health-related inquiries.

In January, OpenAI introduced ChatGPT Health, an updated version of its chatbot that, according to the company, can analyze medical records and data from wearable devices to answer medical questions.

Currently, the program is available only through a waitlist. Rival company Anthropic offers similar features to users of its chatbot, Claude.

Both companies emphasize that their developments are large language models that cannot replace qualified medical care and should not be used for diagnosis. Instead, chatbots are capable of summarizing complex test results, helping prepare for doctor visits, and identifying significant changes in health status based on medical records.

However, how safe and accurate are these technologies in analyzing health data? Is it really worth relying on them?

Here are a few points to consider before discussing your health with AI:

Personalized information from chatbots may surpass Google

Some specialists who have worked with ChatGPT Health and similar platforms consider them a significant step forward in the field of medical information.

Although AI systems are not perfect and can sometimes provide incorrect advice, they often offer more personalized and specific responses than Google search results.

“Often, the patient has no alternative, or they are guessing,” notes Dr. Robert Wachter, a medical technology expert from the University of California, San Francisco. “Therefore, if these tools are used responsibly, they can provide real benefits.”

In countries like the UK and the US, where patients can wait weeks for a doctor's appointment and hours in an emergency room, chatbots can help avoid unnecessary panic and save time.

Moreover, they can indicate the need for immediate medical attention if symptoms are dangerous.

One of the advantages of new chatbots is their ability to take into account users' medical histories, including current medications, age, and medical records.

Even if you do not share your medical data, Wachter and other experts advise providing as many details as possible to make the responses more accurate.

Do not turn to AI for alarming symptoms

Wachter and his colleagues emphasize that in some cases, it is necessary to seek medical help immediately rather than rely on a chatbot. Symptoms such as shortness of breath, chest pain, or severe headaches may indicate serious health issues.

Even in less critical situations, both patients and doctors should approach AI programs with caution, notes Dr. Lloyd Minor from Stanford University.

“When it comes to serious medical decisions or even less significant health issues, one cannot rely solely on the information provided by large language models,” adds Minor, dean of Stanford's medical school.

Even in the case of common conditions like polycystic ovary syndrome, it is better to consult a real doctor, as the disease can manifest differently in different people, affecting treatment choices.

Consider privacy when uploading health data

Many of the benefits offered by AI bots depend on users sharing personal medical information. It is important to remember that data shared with an AI company is not protected by the US federal privacy law that regulates the handling of sensitive medical data.

The law, known as HIPAA, imposes fines and criminal penalties on doctors, hospitals, and other medical institutions for disclosing medical information. However, it does not apply to companies creating chatbots.

“When a person shares their medical record with a model, it is not the same as sharing it with a doctor,” explains Minor. “Consumers should understand that the privacy standards in these cases are completely different.”

OpenAI and Anthropic claim that users' health data is stored separately and protected by additional measures. The companies do not use medical information to train their models. Users must separately consent to the sharing of such data and can withdraw their consent at any time.

While interest in AI is high, independent research on such technologies is still in its early stages. Initial results show that programs like ChatGPT perform well on complex medical exams but often struggle in interactions with live people.

A recent University of Oxford study involving 1,300 participants showed that those who used AI chatbots to seek information about hypothetical diseases did not make more informed decisions than those who relied on traditional online searches or their own judgment.

When chatbots were presented with medical scenarios in written form, they correctly identified the underlying condition 95% of the time.

“That was not the problem,” comments lead author Adam Mahdi from the University of Oxford. “The issues arose during interactions with real people.”

Mahdi and his team identified several difficulties in communication. People often did not provide chatbots with enough information for accurate assessment of the problem, and the systems frequently gave a mix of correct and incorrect answers, making it difficult for users to distinguish between them.

The study, conducted in 2024, did not cover the latest versions of chatbots, including ChatGPT Health.

An AI second opinion can be helpful

The ability of chatbots to ask clarifying questions and extract key information from users is an area where, according to Wachter, there is still much room for improvement.

“I believe they will become truly effective when their communication with patients is more ‘medical’ and the dialogue resembles a real conversation,” says Wachter.

In the meantime, one way to increase confidence in the information received is to consult multiple chatbots, just as patients sometimes seek a second opinion from another doctor.

“Sometimes I enter the same data into ChatGPT and Gemini,” shares Wachter, referring to Google’s AI tool. “And when their responses match, I feel more confident that it is the correct answer.”

The article “Asking for health advice from a chatbot? Keep in mind a few important factors after the launch of ChatGPT Health by OpenAI” was published on K-News.

Read also:

The Cabinet of Ministers updated the registers of the Ministry of Construction: paid services for the registry of construction projects and confirmation of work completion are being introduced.

- On December 25, 2025, the Cabinet of Ministers issued Resolution No. 832, which introduced...

The Trump Administration Ordered Military Contractors and Federal Agencies to Cease Cooperation with Anthropic

Donald Trump stated in his message on Truth Social that government agencies, including the...

In Kyrgyzstan, they want to launch a climate education program by 2030.

The Ministry of Science, Higher Education and Innovations of the Kyrgyz Republic has initiated...

Driver reform, words of Zhapikeev, visas, prices, GIC. What was January 2026 like?

January 2026 became particularly lengthy for the residents of Kyrgyzstan. It seems that not days,...

Anthropic accused Chinese companies of stealing Claude's data

Anthropic has accused a number of Chinese artificial intelligence developers, such as DeepSeek,...

Problems of Diagnosing Hip Joint Dysplasia in Kyrgyzstan. Archival Interview with Kasymbek Tazabekov

In Kyrgyzstan, orthopedic diseases remain one of the key medical problems. According to the...

AI can change your political views

Recent studies have shown that a brief conversation with a trained chatbot was four times more...

"Digital Control from Import to Patient." At what stage is the drug traceability system in Kyrgyzstan now?

Over the past few years, an Electronic Database of Medicines and Medical Devices (EDB) has been...

The Cabinet allowed the branding of livestock during microchipping: the "i" mark will be applied with liquid nitrogen

Curl error: Operation timed out after 120000 milliseconds with 0 bytes received...

AI Bots Have Their Own Social Network: What You Need to Know About Moltbook

Closed to humans: in the new social network for AI bots, they can interact, spend time, and share...

Tokayev: Kazakhstan has entered a new stage of modernization

Curl error: Operation timed out after 120001 milliseconds with 0 bytes received...

Aeon: How the Conquest of Foreign Territories Came to Be Considered Unacceptable

Author: Cary Goettlich In the modern world, there are fewer and fewer things that evoke consensus...

Trump's World Council Breaks Through the BRICS Wall

The criticism of the World Council cannot hide the ambitious design of this initiative. Despite...

Game with Worlds: New Technologies Will Cancel Out Clip Attention

As the co-founder of Chima, a research lab in applied AI, I actively contemplate the concept of...

Humanity is Not Ready. Uncontrolled Development of AI Poses Catastrophic Risks

Photo from the internet. Dario Amodei, CEO and co-founder of Anthropic In one of its essays, the...

Non-Combat and Unrecognized: Suicides in the Ukrainian Army That Are Silent

This is a translation of an article from the Ukrainian service of the BBC. The original is...

TikTok collects user data even if they don't use the app. BBC explains how to protect your privacy

TikTok actively collects information about its users, which is common practice for apps; however,...

Users are Massively Deleting ChatGPT: What is the Reason?

The material is prepared by K-News. Copying or partial use is only possible with the permission of...

The J-1 Cultural Exchange Program in the USA has turned into a scheme for profiting from and exploiting foreign interns, - The New York Times

Another scheme involved employing close relatives of the CEO, which brought his family over 1...

Mongolia Joined the Forum on Geostrategic Interaction in the Resource Sector (FORGE)

According to information presented by MiddleAsianNews, the United States, together with allies and...

The Ministry of Education proposes to enhance the work of the online school "Tunguch" with three new areas.

The government of Kyrgyzstan will receive for public discussion a draft resolution on the pilot...

Casino Only for Foreigners: Kazakhstan Tries to Repeat the Singapore Trick

Kazakhstan is striving to replicate Singapore's successful experience in creating gambling...

AI Chatbots Distort Users' Reality — Study

A study by analysts at Anthropic has shown that in rare but significant cases, interactions with...

In Davos, the heads of Microsoft, Anthropic, and Google DeepMind shared their views on the future of AI and expressed concerns about its risks.

At the World Economic Forum in Davos in 2026, key figures from Microsoft, Anthropic, and Google...

Russian and Ukrainian Drone Manufacturers Buy Components from the Same Chinese Companies

According to The Financial Times, Russian and Ukrainian drone manufacturers are using the same...

Cells, Humans, and AI Think Alike — A Group of Scientists Discovered a Common Algorithm

What do a developing embryo, an ant colony, and the latest version of ChatGPT have in common? At...

How "Eurasia" is Changing the Daily Lives of Millions in Kyrgyzstan

Curl error: Operation timed out after 120001 milliseconds with 0 bytes received...

"War Will Change Beyond Recognition." Colonel of the General Staff of Russia — on the Lessons of Military Actions in Ukraine, Changes in the Army, and the Weapons of the Future

The conflict in Ukraine has not only become a catalyst for changes in the military sphere but has...

The sister of Chinghiz Aitmatov addressed the people of Kyrgyzstan (text of the address)

In her address, Roza Torokulovna touches on the topic of malicious rumors and accusations that are...

Do we need clinical bases to train a good doctor? Explanation by Professor T. Batyraliev

In the Kyrgyz Republic, the reform of the medical personnel training system is becoming one of the...

How Big Data is Changing Forecasts: Analytics, Algorithms, and Trends for 2026

Transformation of Forecasting with Big Data in 2026...

Xi Jinping is conducting purges in the general staff. What does this mean for China and its neighbors, including Central Asia?

Over the weekend, General Zhang Youxia, who held the position of Vice Chairman of the Central...

Why Did Trump Invite Only Kazakhstan and Uzbekistan from Central Asia to the "World Council"? Opinions

On January 16, U.S. President Donald Trump announced the creation of a new international structure...

Zelensky spoke about the 20 points of the peace plan. What's new in it?

President of Ukraine Volodymyr Zelensky presented a draft agreement for ending the war, which was...

City Against Dogs: Culling Animals - What Is It: Corruption, Inhumanity, or a Solution to Problems?

How the issue of street animals is being addressed in Bishkek Events related to stray animals...

Voting - a right or a duty? The controversial bill of the deputy

Deputy Marlen Mamataliyev has proposed making participation of citizens of Kyrgyzstan in elections...

Arsen Askerov: The training of doctors in obstetrics, gynecology, and pediatrics needs to be significantly strengthened

Doctor of Medical Sciences and President of the Kyrgyz Association of Obstetricians, Gynecologists,...

Tokaev gave a major interview to the Turkistan newspaper. It covers reforms, AI, nuclear power plants, Nazarbayev, and much more.

President Kassym-Jomart Tokaev shared his views on current challenges and achievements in his...

Rector Kudaiberdi Kozhobekov: OshSU - the flagship of higher education in Kyrgyzstan

In this interview with the rector of Osh State University, Kudayberdi Kozhobekov, we discuss key...

ChatGPT will determine users' age

The company OpenAI has announced a new update for ChatGPT that will allow it to determine the age...

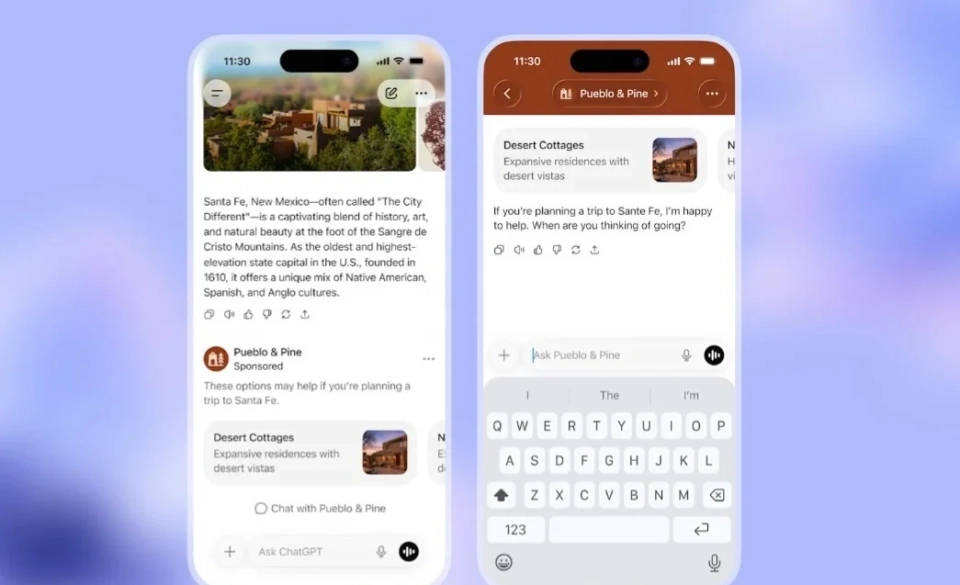

OpenAI Launches Ads in the Free Version of ChatGPT

The company OpenAI has announced the start of testing advertisements in its product ChatGPT. Ads...

TikTok tracks you even if you don't use the app

It turns out that TikTok collects confidential and even compromising data about users, even if...

Mongolian Engineer at Audi, Designer of Future Concept Cars Audi Activesphere and Audi Concept C

DAVAADORJ Battugs According to information provided by MiddleAsanNews, Davaadorj Battugs is an...

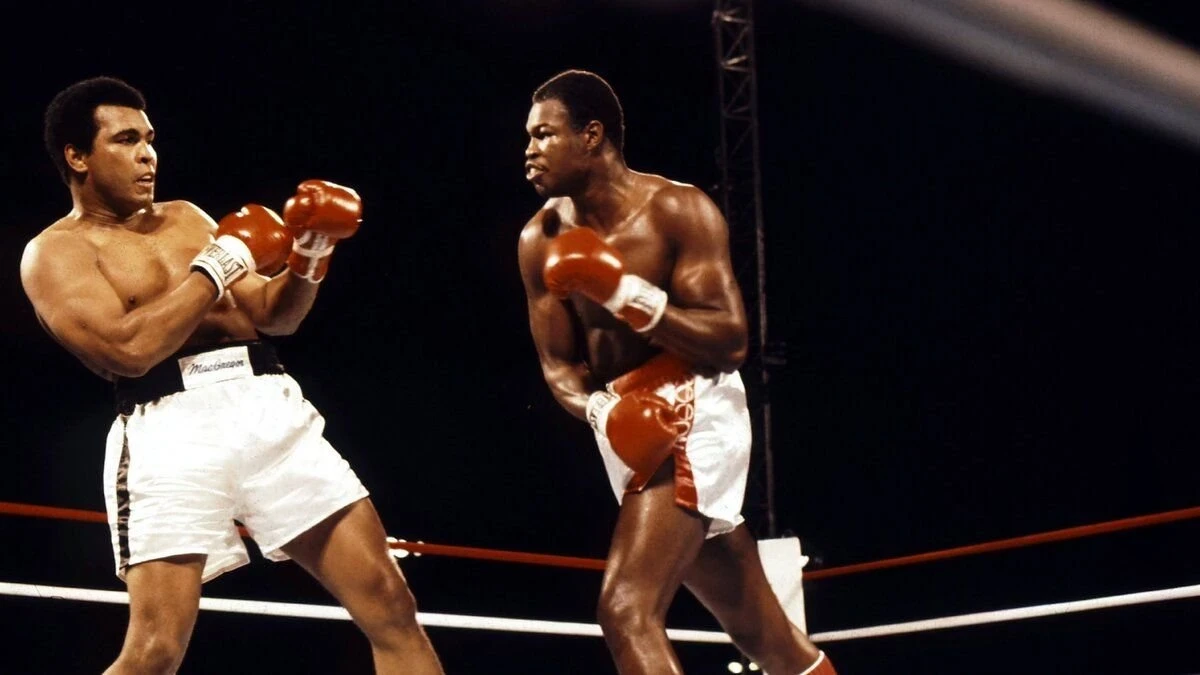

Laboratory Study: The Myth that Boxing Caused Muhammad Ali's Parkinson's Disease

The question of whether boxing caused Muhammad Ali's Parkinson's disease sparks heated...

"Her Husband Could Never Accept That Our Daughter Is Sick." The Story of a Single Mother and Her Daughter

Begimai Toktosunova, being a single mother, faced enormous difficulties after her divorce when the...

Our People Abroad: Kyrgyz Woman Zhipara Kamalidin Kyzy Confused "Gichim" with "Kimchi" in Korea and Instead of 10,000 Won Gave "10 Som"

Turmush continues to introduce readers to Kyrgyzstani people who have found new places of residence...

Sadyr Japarov spoke at the IV People's Kurultai (text of the speech)

Today, December 25, the President of Kyrgyzstan, Sadyr Japarov, addressed the people, the deputies...

Askhat Alagozov: Sadyr Japarov Does Not Distribute Money on Social Media

Citizens are urged to be vigilant Askhat Alagozov, the press secretary of the president, reported...